Why the Anthropic-Pentagon rupture is not corporate defiance

The February 2026 AI governance standoff

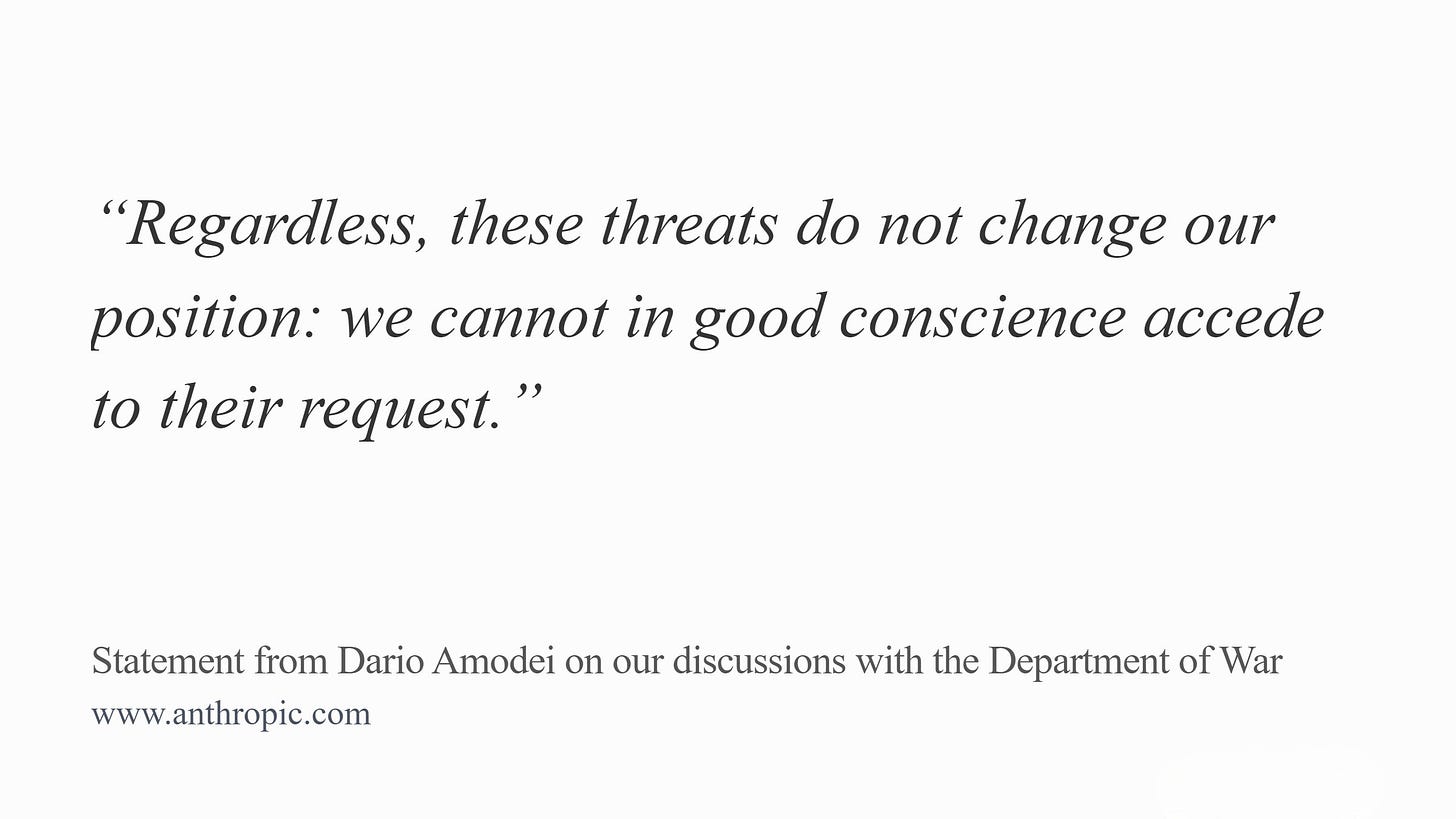

On February 27, 2026, the Trump administration designated Anthropic — the American AI company behind Claude — a “Supply-Chain Risk to National Security.” The designation was triggered by Anthropic’s refusal to remove two safeguards from its $200 million Pentagon contract: one barring the use of its AI for mass domestic surveillance of Americans, and one barring fully autonomous weapons systems operating without human oversight.

To grasp what this means, it is worth examining the designation itself.

“Supply chain risk” is a legal mechanism rooted in two overlapping authorities: 10 U.S.C. § 3252, and the Federal Acquisition Supply Chain Security Act of 2018 (FASCSA), which is implemented through Federal Acquisition Regulation clause 52.204-30. The defense statute defines supply chain risk in expansive terms. It covers the risk that an adversary may sabotage a national security system, maliciously introduce unwanted functionality, or otherwise subvert that system’s design, integrity, manufacturing, production, distribution, installation, operation, or maintenance. To apply this designation to Anthropic for exercising lawful contractual discretion is to treat an American company as though it were a foreign actor attempting to compromise US defense systems. That is the violence of the designation: coercion as method, intimidation as message, vindictiveness as motive.

The Federal Acquisition Supply Chain Security Act was enacted in 2018. Seven years passed before the first order was issued, in September 2025, against a Swiss cybersecurity firm with alleged ties to Russia. Anthropic, in February 2026, became the second company ever designated under this authority, and the first American company in the statute’s history.

The Department of Defense invoked two legal authorities to justify the designation, and both are narrow tools. The first, 10 U.S.C. § 3252, allows the Secretary of Defense to exclude a company from defense contracts. The second is FASCSA itself, which targets supply chain risks in federal procurement. On television, however, Secretary Hegseth went further: he claimed that no company holding any military contract could do business with Anthropic at all. That is a much broader ban than either law allows.

That kind of total ban was not invented out of nowhere. It exists in US law, but in a completely different statute, written for a completely different kind of threat. In 2018, Congress enacted Section 889 of the National Defense Authorization Act for Fiscal Year 2019 to block the Chinese telecommunications giant Huawei from American infrastructure, on the grounds that its equipment, particularly the hardware used to build 5G mobile networks, could allow the Chinese government to spy on American communications. What made the statute remarkable was its reach. Unlike ordinary procurement law, which governs only what companies sell to the federal government, this provision extended into their entire operations: any contractor doing business with the United States had to strip Huawei equipment from every corner of its commercial work, federal or otherwise. The law followed the perceived threat across the line between public and private.

That was a statute written for a foreign company suspected of state-sponsored espionage. The authorities the Pentagon invoked against Anthropic, FASCSA among them, do not reach a company’s commercial operations at all.

Within its actual statutory scope, the designation still carries substantial weight. Every federal contractor performing military work must now determine whether Anthropic appears anywhere in its supply chain, report it, and remove it. The designation does not merely sever the Pentagon’s own relationship with Anthropic; it ripples through the entire federal contracting ecosystem.

The Pentagon took a tool designed to exclude foreign adversaries who might sabotage US defense systems, applied it for the first time to an American company, and used it not in response to any security threat but in response to Anthropic’s refusal to cross two specific ethical lines.

Anthropic was already serving the United States across the military, the intelligence community, and classified defense networks. Declining the two applications at issue, domestic mass surveillance of Americans and fully autonomous lethal weapons, is not a refusal to serve the country. These are practices that neither the Fourth Amendment nor existing federal statutes clearly sanction, and that Congress has never authorized. The judgment is not Anthropic’s; it belongs to the country’s own legal framework, applied to capabilities that framework was never written to anticipate.

Within hours of the standoff hitting the news, OpenAI secured its own Pentagon deal for classified networks, reportedly under terms that included the same two safety provisions Anthropic had been punished for defending. The same restrictions were accepted from one company and held against the other. The apparent contradiction was striking, but the public discourse that followed missed the deeper structural issue entirely.

Media coverage, government rhetoric, and public debate converged on a single framing: should a private company or the federal government decide how military AI is used? This framing is not merely reductive. It is dangerous, because it erases the institution that should be making these decisions: the United States Congress.

The Binary That Obscures the Real Question—

The dominant narrative presented the breakdown as a confrontation between corporate power and executive authority — tech billionaires versus the Pentagon. A CBS interview with Anthropic CEO Dario Amodei on the evening of February 28 illustrated this pattern. The interviewer pressed repeatedly on a single axis: “Why should a private company have more say than the Department of Defense?” “Why should Americans trust a CEO over the federal government?”

The questions may sound reasonable until one examines what was actually said. Across a thirty-minute interview, Amodei made the same point at least three times: he does not believe a private company should hold this authority permanently. He explicitly stated that Congress needs to set guardrails for military AI, particularly in areas where the technology has outpaced existing law. He described the current situation as untenable and called for legislative action. Yet the interviewer did not pursue this line. The interview remained locked on a corporate-versus-government binary that excluded the democratic process altogether. In other words, the repeated questioning was not exploring a frame but enforcing one.

“This is not tenable in the long term. I don’t think the right long-term solution is for a private company and the Pentagon to argue about this. Congress needs to act here. But Congress doesn’t move fast. So in the meantime, we need to draw a line in the sand.”

— Dario Amodei

When the public conversation about AI regulation is reduced to a power struggle between two actors — both operating without legislative mandate in this specific domain — the possibility of democratic oversight disappears from the discourse. And when it disappears from the discourse, it disappears from the political agenda.

Two Red Lines, Two Legislative Gaps—

Anthropic’s two restrictions are not arbitrary corporate preferences. They correspond to two areas where AI capabilities have overtaken the legal framework.

As Amodei detailed in his February 26 statement, current law allows the government to purchase detailed records of Americans’ movements, web browsing, and associations from commercial sources without a warrant, a practice the Intelligence Community itself has flagged as a privacy risk and one that has prompted bipartisan pushback in Congress. The practice is not illegal; it was simply not operationally useful at scale before the era of large language models and advanced analytics. AI changes the scope: powerful models can assemble scattered, individually innocuous data points into a comprehensive portrait of a person’s life, automatically and at massive scale. Neither Fourth Amendment doctrine nor the statutes Congress has enacted have caught up with what is now technically possible.

The same legal obsolescence extends to the battlefield. Anthropic’s concern is not the partially autonomous systems already in use today. It is fully autonomous weapons: systems that identify, select, and engage targets without any human involvement.

Amodei argued, with technical specificity, that current AI systems are not reliable enough for this application, citing the fundamental unpredictability that anyone working with these models recognizes. Beyond reliability, accountability itself is at stake: if a fleet of coordinated autonomous systems operates under a single command node, the traditional hierarchy of military accountability — built on the assumption that human soldiers exercise judgment at multiple levels — collapses.

Neither of these concerns is ideological. Both are structural. And both point to the same conclusion: legislation has not kept pace with capability.

The Precedent Problem—

Anthropic offered to work directly with the Department of Defense on R&D to prototype these systems in a controlled environment. The Pentagon declined, insisting that it be able to deploy without restrictions from the outset.

The administration’s response to Anthropic’s position set a precedent that extends well beyond AI policy. The “supply chain risk” designation was deployed against an American company for exercising contractual discretion, and the message to every technology firm doing business with the federal government was unmistakable: compliance is not negotiated; it is compelled.

Here, the internal contradiction of the government’s own position deserves emphasis. The government simultaneously threatened two actions that are logically incompatible: designating Anthropic a supply chain risk (labeling the company a security threat to be excluded) and invoking the Defense Production Act to compel continued service (labeling the company’s technology as essential to national security). A company cannot be both a threat to be quarantined and an asset to be conscripted. The coexistence of these two threats reveals that neither was grounded in a genuine security assessment, and that both were instruments of coercion.

The punitive character of the action is further underscored by Anthropic’s broader conduct. This is a company that voluntarily forfeited several hundred million dollars in revenue by cutting off access to firms linked to the Chinese Communist Party (CCP), some of which had been designated by the Department of Defense as Chinese Military Companies. It likewise shut down CCP-sponsored cyberattacks targeting its systems and advocated for strong export controls on AI chips to maintain a democratic advantage. Whatever one thinks of Anthropic’s red lines, the suggestion that this company is a threat to American national security is not supported by its record.

If the precedent holds that a principled disagreement over two narrow use cases — representing, by Anthropic’s account, roughly one percent of deployed applications — can trigger a national security designation, then the space for any company to maintain safety restrictions narrows to zero.

“Disagreeing with the government is the most American thing in the world and we are patriots in everything we have done here. We have stood up for the values of this country.”

— Dario Amodei

What Congressional Action Could Look Like—

The policy vacuum at the center of this dispute is not abstract. It maps onto three concrete areas where Congress could act.

First, the data broker loophole: a statutory framework regulating government purchase and AI-enabled analysis of commercially collected personal data would close the surveillance gap that both Anthropic and bipartisan voices in Congress have identified. Several proposals have addressed this gap in recent sessions, including the Fourth Amendment Is Not For Sale Act, the Government Surveillance Reform Act, and the American Data Privacy and Protection Act. None has been enacted into law.

Second, autonomous weapons oversight: a statutory requirement for meaningful human control in lethal autonomous systems, with defined thresholds for what constitutes “meaningful,” would establish the accountability framework that both Amodei and a broad coalition of legal scholars and military ethicists have called for.

Third, the weaponization of supply chain designations: Congressional review mechanisms for the application of national security designations to domestic companies — particularly where the designation appears retaliatory rather than protective — would prevent the tool from being used as a coercive instrument against lawful commercial actors.

None of these proposals are novel. What is missing is a Congress equipped to address both the technical and governance dimensions of AI, and a public discourse that demands such engagement rather than treating lawmakers as bystanders.

The Democratic Deficit in AI Governance—

The administration’s action against Anthropic will likely be remembered as the moment AI governance became a national security flashpoint. The most damning indictment of this affair, though, is not what played out in public. It is what the public discourse refused to engage with: a legislative framework that would render these confrontations unnecessary.